Zeever.ca: A Low-Budget Experiment in Sovereign Canadian AI

Canada has committed billions to sovereign AI.

But if you actually try to build something today, the experience looks very different.

Meanwhile, a different reality is taking shape globally, particularly in China. AI systems are being built on lower-cost hardware, with optimized models designed for efficiency over scale. Not every solution runs on massive clusters. Many run on constrained infrastructure, and they're built for it.

Zeever.ca was inspired by that approach.

The Setup: Built on What Already Exists

This wasn't a greenfield build.

The system is intentionally constrained:

- Older desktop with an RTX 3070 (8GB VRAM): for local inference

- Existing VPS with no GPU: set up years ago to host my websites

- Tailscale connection: linking the two environments

No new infrastructure. No specialized AI hosting. Just repurposing what was already available.

That constraint shaped every decision.

The Goal

Zeever is an experiment. Not in theory, but in practice.

What does "sovereign Canadian AI" look like if you try to build it yourself with limited resources?

No grants. No hyperscaler contracts. No supercomputer access. Just public Canadian data, open models, and modest infrastructure.

Stage 1: Grounding in Real Canadian Data

The experiment starts with City of Toronto (Toronto.ca) data.

The goal: build a system that can answer real municipal questions using Canadian data.

Stage 2: Data Structures, Efficiency Over Scale

Taking cues from the efficiency-first approaches seen in parts of the Chinese AI ecosystem, multiple strategies were tested:

- Raw scraping: baseline ingestion

- Chunked RAG: standard retrieval patterns

- Structured extraction: cleaner, typed outputs

- Early GraphRAG-style representations: relationship-aware retrieval

Key insight: efficiency is not just about smaller models. It's about better data design.

Stage 3: Model Experiments

Primary model:

- Qwen 2.5 (7B Instruct): chosen specifically because it runs on 8GB VRAM

Additional APIs tested:

- Together.ai

- Fireworks.ai

- OVH Cloud

Stage 4: Infrastructure Reality

The split architecture became clear.

Local machine (GPU):

- Runs the model via Ollama

- Handles inference where possible

VPS (no GPU):

- Hosts the application

- Manages requests and routing

- Acts as the public interface

Connected via Tailscale.

This creates a practical pattern: keep compute local, expose it through lightweight infrastructure.

Stage 5: Inference Strategy

Three approaches emerged:

- Local inference: most sovereign, limited scale

- API inference: fast, but often not Canadian-hosted

- Hybrid: what actually works

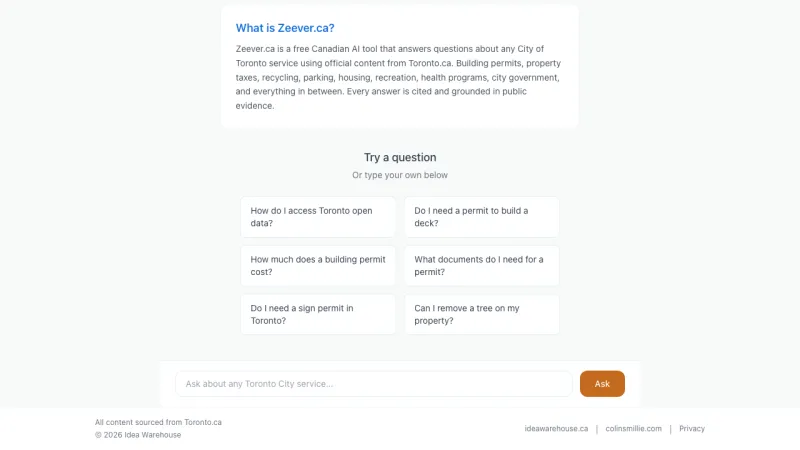

Stage 6: The Working Demo

Zeever now includes a working prototype that:

- Answers questions using Toronto municipal data

- Compares models and approaches

- Measures latency and quality

What This Reveals

1. Sovereign AI is possible on a budget.

You can build meaningful systems with a single GPU, existing infrastructure, and open models.

2. Efficiency is underrated.

The kind of constraint-driven engineering seen in parts of the Chinese ecosystem is a real competitive advantage.

3. Infrastructure is the bottleneck.

Canada still lacks accessible modern GPUs, at-scale infrastructure, and easy access to local compute.

4. Sovereignty is a spectrum.

Most systems end up hybrid. Local plus external. Controlled plus outsourced.

Final Thought

Zeever.ca is not a production system. It's a working proof.

Sovereign AI doesn't start with billion-dollar investments. It starts with using what you already have and pushing it as far as it will go.

The question isn't whether it's possible. It's whether we can make it practical, scalable, and accessible in Canada.

This article originally appeared on colinsmillie.com.

Colin Smillie

Most recently VP Technology at YMCA Canada. Building and shipping real products with AI-assisted development. More about Colin's advisory and executive work at colinsmillie.com.